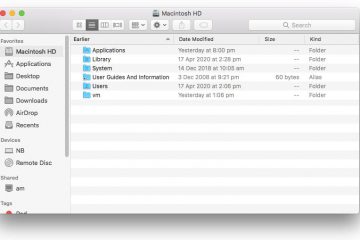

Redirecting home folders to OneDrive, Dropbox or Google Drive on macOS

Under Windows, the process of redirecting a user profile’s home folders, aka ‘special folders’ or ‘known folders’, such as Documents,[…]

Ministry of Everything

When using a QNAP backup target to back up macOS 10.14 and/or Windows 10 over SMB, you may experience frequent problems[…]

Pi-hole is a DNS-based ad and tracking blocker. Originally intended to run on a Raspberry Pi, Pi-hole can itself run[…]

If you have attempted to use the Games section in Kodi 18, you may have run into the issue of[…]
